INTRODUCTION

Video content on the social network site YouTube is a primary source of information in the health field. Since the site was founded in 2005, any registered user has been able to publish videos on YouTube provided that the community rules are satisfied.1,2,3,4,5 There is little editorial control over what is published: controls exist for copyrighted video or sound, but not for the quality or the type of content. Moreover, video keywords and metadata use an uncontrolled vocabulary, so retrieving content requires social tagging to be taken into account.

In the scientific literature of the health field, the quality of the information in videos is analysed in very different ways, as is interaction with the videos. When quality is analysed, little attention is given to retrieval and search design strategies. Moreover, different methodologies exist for analysing video content. On the one hand, quantitative analyses evaluate video content using a wide range of methodologies. On the other hand, qualitative studies use sentiment analysis techniques based on comments or on interaction with the videos on the platform.

There are different issues regarding video consumption on YouTube. One issue is user behaviour in searching for videos: searches done by patients are very different from those done by physicians with health knowledge.2 Social tagging therefore makes video retrieval difficult, with the consequence that biased or misleading information may be retrieved.

However, there are several reasons to publish videos on YouTube in the health field. For example, videos are published to provide resources for instructors in health education,3 to give additional information to health students, to educate patients about preventive actions4 or to report on certain diseases.5 Videos are also published to train future physicians in surgical techniques,6 even when teaching space is limited.

Other literature reviews suggest that video searching should use a snowball technique,7 or point out that the quality of YouTube videos for educating patients or future physicians is underdeveloped.8 They also report a need to develop algorithms that provide better retrieval results9 and find no large differences between video content analysis methods.10 This literature review addresses two questions that consistently appear in the scientific literature.

The first question concerns problems with search design and video retrieval, as no controlled vocabulary exists on YouTube. This raises many issues concerning information retrieval and content reliability. YouTube retrieval methodologies are diverse. First, videos can be retrieved through manual search, which makes retrieval dependent on the YouTube algorithm and therefore biased. Second, videos can be retrieved through the YouTube Application Programming Interface (API), which removes the dependency on the algorithm from the search strategy. Finally, data mining strategies can be used to retrieve videos and their metadata. When these methodologies are used, not only algorithm dependencies but also social tagging must be considered, because the uncontrolled vocabulary that YouTube permits its users leads to biased video retrieval.

The second question concerns the different methodologies that analyse the quality of videos with a quantitative approach. These methodologies are diverse and not standardised, which can confuse future researchers about which methodology to use and can lead to biased results.

In the scientific literature, different methodologies are reported for analysing the information quality of health-related YouTube videos. The results of these methodologies make it possible to decide whether the information contained in videos can serve to educate patients and medical students about diseases or surgical techniques, and whether videos are suitable for use by health professionals and students. Additionally, health researchers use different video retrieval strategies, which include the information search design and keyword selection. The goal of this paper is to study these procedures as found in the scientific literature.

This paper is a systematised literature review of YouTube research in health with the aim of identifying the different keyword search strategies, retrieval strategies and scoring systems used to assess video content.

METHODS

This research involves a systematic literature review. In this study, 176 peer-reviewed papers were analysed (11) using descriptive statistics and qualitative paper analysis. Frequencies and percentages were computed for the outcome variable. In May 2018, a search was run in the PubMed database using the search phrase “(YouTube[Title] and (Educational Measurement[MeSH Terms] or Video Recording[MeSH Terms] OR Information Dissemination[MeSH Terms] or Social Media[MeSH Terms] or YouTube[Other Term])”. This search returned 306 papers, which were downloaded into a Microsoft Excel 2017 spreadsheet. The title and abstract of all 306 retrieved papers were read.
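The search described above was run in the PubMed interface. The sketch below only illustrates how a comparable query could be issued through the NCBI E-utilities `esearch` endpoint, so that the search phrase and date limits are recorded together with the request. The balanced closing parenthesis and upper-case operators in `QUERY`, the date window and the `retmax` value are assumptions made for illustration, not the authors' exact settings, and the request is not claimed to reproduce the 306 hits.

```python
from urllib.parse import urlencode

ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"

# Assumption: the missing closing parenthesis belongs at the end of the phrase.
QUERY = (
    "(YouTube[Title] AND (Educational Measurement[MeSH Terms] "
    "OR Video Recording[MeSH Terms] "
    "OR Information Dissemination[MeSH Terms] "
    "OR Social Media[MeSH Terms] OR YouTube[Other Term]))"
)


def build_esearch_url(term, mindate="2005", maxdate="2018/04", retmax=500):
    """Build an esearch request URL limited by publication date."""
    params = {
        "db": "pubmed",
        "term": term,
        "datetype": "pdat",  # filter on publication date
        "mindate": mindate,
        "maxdate": maxdate,
        "retmax": retmax,    # illustrative upper bound on returned IDs
        "retmode": "json",
    }
    return f"{ESEARCH}?{urlencode(params)}"


url = build_esearch_url(QUERY)
print(url)
```

Storing the generated URL alongside the retrieval date gives later readers the exact request that was made.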

The inclusion criteria were as follows. First, the articles had to be published between 2005 and April 2018. Second, the papers had to be written in English, French, German, Portuguese or Spanish. Third, the papers had to use information quality analysis methodologies to evaluate videos with a quantitative approach.

The exclusion criteria covered papers that described qualitative analyses, such as sentiment analysis or YouTube comment analysis, and letters to the editor. These papers were excluded because they evaluated user interaction with videos rather than information quality, and they were deleted from the spreadsheet.

All included articles were read in full, and different items were recorded in the spreadsheet: video search design, inclusion criteria for videos, retrieved metadata and the systems used to analyse the quality of the information contained in the videos.

Regarding video search design, keyword selection, the use of any technological tool to select keywords or search phrases, and information retrieval strategies were recorded. Regarding inclusion criteria, parameters such as length, number of views and language were recorded. All variables were entered into the spreadsheet for further analysis with descriptive statistics.

RESULTS

As YouTube was created in 2005, the analysed papers span the period from 2005 to April 2018. As shown by the number of articles published (Fig.), YouTube is increasingly becoming a primary source of analysis, and it is likely that there will be more video content assessment methods in future research. Therefore, it is necessary to establish homogeneous and standardised content assessment systems. Furthermore, so that research can be compared and repeated, papers should provide a complete list of analysed videos. Of all reviewed papers, only 19 (10.79 %) provided such a list.

Keyword selection strategy

One drawback in using a social network site like YouTube is that both the user and the video creator can use non-conventional or social tagging instead of a controlled vocabulary. Video creators can tag their videos freely, and users can likewise use free vocabulary to retrieve videos. The absence of a controlled vocabulary can bias information retrieval, so searches might not find relevant information or might miss some necessary information.

Because of this type of structure, it is necessary to use different strategies to find appropriate keywords that permit searchers to retrieve relevant and persistent information. Using keyword search tools such as Google Keyword, Google Trends or the autocompletion function (in which YouTube suggests keywords used by users) is enough for a layperson with no research needs to be satisfied with the information search. It is therefore often assumed that a research team does not need a librarian to design an information search strategy in social networks like YouTube. To understand how information is organised, improve the use of these tools and save research costs, however, it is necessary to have a research-embedded librarian as part of a research team.

Having a research-embedded librarian has many advantages for an expert search of information in specialised databases such as MEDLINE or SCOPUS12 as well as in social media. Only one article (0.56 %) reported the inclusion of a master’s-prepared librarian in the research team.13

Any information search uses a strategy of keyword searches to obtain information. In 157 articles (89.20 %), however, there was no indication whether the keywords had been previously selected or whether they corresponded to established criteria in the research design.

Different technological tools were used for keyword selection. Google Trends or Google Insights (n = 12, 6.81 %) was among the preferred tools for selecting keywords on YouTube; the two are now the same tool. Google Trends allows the user to analyse graphically the search trend of a specific keyword or set of keywords over time. Other approaches include a combined keyword search strategy (n = 4, 2.27 %) using newspapers, scientific literature or medical books.14

One of the combined strategies used the Google AdWords keyword tool together with MeSH (Medical Subject Headings).15 In other cases, keywords were based on the terms selected by patients and health students (n = 2, 1.13 %).

Two papers with Portuguese authors (1.13 %) used medical descriptors from DeCS (Health Sciences Descriptors) as search keywords.16-17 Another strategy was to perform several iterations of YouTube searches with different keywords before choosing the definitive keywords (n = 1, 0.56 %).

Finally, other options included using the YouTube autocomplete option in the search box or looking for YouTube channels of trustworthy healthcare organisations such as the Red Cross.18 In addition, some papers noted that videos do not have subject headings of the kind that help when searching for terms in academic databases.13 No evidence of variation in the terms in videos’ metadata could be found.
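The frequencies and percentages reported in this subsection can be rechecked from the raw counts. The short sketch below assumes a denominator of 176 papers (the sample size given in the Methods) and truncation to two decimals, which appears to reproduce the reported figures; plain rounding would give, for example, 6.82 % rather than 6.81 % for 12 papers. The dictionary labels are illustrative names, not the authors' variable names.

```python
TOTAL_PAPERS = 176  # sample size stated in the Methods

# Counts as reported in the text; the labels are illustrative.
keyword_strategy_counts = {
    "no keyword selection reported": 157,
    "Google Trends or Google Insights": 12,
    "combined keyword search": 4,
    "terms chosen by patients or students": 2,
    "DeCS descriptors": 2,
    "iterative trial searches": 1,
}


def truncated_percentage(count, total):
    """Return count/total as a percentage string truncated to two decimals."""
    hundredths = count * 10000 // total  # integer arithmetic avoids float error
    return f"{hundredths // 100}.{hundredths % 100:02d}"


for label, count in keyword_strategy_counts.items():
    print(f"{label}: n = {count}, {truncated_percentage(count, TOTAL_PAPERS)} %")
```

Stating whether percentages are truncated or rounded makes small discrepancies between papers easier to explain.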

Information retrieval strategies

One of the great constraints on video retrieval is dependency on the YouTube algorithm. The algorithm shows results depending on many variables in searches such as the number of views and “like” or “dislike” votes on the videos. Although vocabulary in YouTube is uncontrolled, researchers can obtain large enough video samples to perform content-analysis validations.

Three information retrieval strategies were found in this review. The first was manual retrieval (n = 172, 98.29 %), of which five cases (2.84 %) implemented the snowball technique.19 On YouTube, the snowball technique is carried out by following the algorithm’s video recommendations20 or by retrieving videos that did not appear when searching for the usual keywords.21 Recommended videos are thus extracted repeatedly until the recommendations start to repeat. This means that retrieving, viewing and analysing subsequent videos depends on the recommendations of the algorithm.4

The second technique was the use of the YouTube Application Programming Interface (API; n = 3, 1.70 %). A programmer is needed to use the YouTube API, but doing so enables the user to automate content retrieval without depending on the algorithm. It is not possible to use the snowball technique with the YouTube API, but it is possible to download video metadata such as the title, tags, descriptions or URL.22 The YouTube API also permits access to channel metrics if the publisher grants permission.
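As a rough illustration of API-based retrieval, the sketch below builds request URLs for the `search` and `videos` endpoints of the YouTube Data API v3 and extracts the title, tags, description, URL and view count from a `videos` response. It is a minimal sketch, not the procedure used in the reviewed studies: the API key, the query and the sample response are placeholders invented for illustration, and no network request is made. Note that the `order` parameter still determines how results are ranked, so the chosen value should be reported.

```python
from urllib.parse import urlencode

API_BASE = "https://www.googleapis.com/youtube/v3"


def build_search_url(query, api_key, order="relevance", max_results=50, page_token=None):
    """URL for a keyword search that returns video IDs and basic snippets."""
    params = {
        "part": "snippet",
        "type": "video",
        "q": query,
        "order": order,            # e.g. "relevance" or "viewCount"
        "maxResults": max_results,  # the API allows at most 50 per page
        "key": api_key,
    }
    if page_token:
        params["pageToken"] = page_token
    return f"{API_BASE}/search?{urlencode(params)}"


def build_videos_url(video_ids, api_key):
    """URL for fetching metadata and public metrics of known video IDs."""
    params = {
        "part": "snippet,statistics,contentDetails",
        "id": ",".join(video_ids),
        "key": api_key,
    }
    return f"{API_BASE}/videos?{urlencode(params)}"


def extract_metadata(videos_response):
    """Flatten a parsed `videos` response into one record per video."""
    records = []
    for item in videos_response.get("items", []):
        snippet = item.get("snippet", {})
        stats = item.get("statistics", {})
        records.append({
            "video_id": item["id"],
            "url": f"https://www.youtube.com/watch?v={item['id']}",
            "title": snippet.get("title", ""),
            "description": snippet.get("description", ""),
            "tags": snippet.get("tags", []),               # absent when untagged
            "view_count": int(stats.get("viewCount", 0)),  # returned as a string
        })
    return records


# Invented sample shaped like a `videos` response, for illustration only.
sample_response = {
    "items": [
        {"id": "VIDEO_ID_1",
         "snippet": {"title": "Example title", "description": "Example description",
                     "tags": ["example"]},
         "statistics": {"viewCount": "1234"}},
        {"id": "VIDEO_ID_2", "snippet": {"title": "Untagged example"}, "statistics": {}},
    ]
}
records = extract_metadata(sample_response)
print(records[0]["url"], records[0]["view_count"])
```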

The third information retrieval technique found was the use of the TOR network (n = 1, 0.56 %) to browse anonymously and so avoid dependencies on the YouTube algorithm.23 Anonymous searches were also done by deleting cookies, using a new computer or using the browser’s incognito mode. This technique excludes personalisation effects, lead bias and problems with repeatability.

Selection of videos and data collection

YouTube allows results to be sorted and filtered once the search phrase is entered. The filter is divided into upload date, type, duration, features and sort order.24 Sorting by relevance was the option most often used (n = 79, 44.88 %). Sorting by views was second (n = 33, 18.75 %), but it is appropriate only if the number of views is high enough (i.e. 1,000 views). Researchers also combined sorting by views and by relevance (n = 25, 14.20 %) to avoid biased results and to obtain the greatest number of videos.25 Other authors used another sorting technique (n = 8, 4.54 %), and the remainder did not use any ordering method (n = 32, 18.18 %).

Another topic is the variety of inclusion and exclusion criteria based on video duration and views. Twenty-three studies (13.06 %) considered video duration or number of views (table 1). Three studies chose a duration of less than 4 minutes,17,26,27 while six studies (3.40 %) reported that the selected videos were less than 10 minutes long.

One exception in table 1 is a study that included videos of less than 7 minutes and 150 views or fewer. Among videos with medical content, durations between 10 and 20 minutes predominate.

Regarding inclusion or exclusion criteria, there is no agreed threshold for selecting videos by number of views, and the values used are widely scattered. As shown in table 2, the number of views was used as a criterion in just six studies (3.40 %). In fact, there is no definite criterion indicating how many views a video requires to be included in a study. Views can be counted in many ways: YouTube counts quality views, but there are no established criteria for how much viewing time constitutes a view. It might therefore make sense to include videos with fewer views in a research study.
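Inclusion criteria based on duration and views can be applied uniformly once metadata are in hand. The sketch below assumes durations in the ISO 8601 form returned by the YouTube Data API (for example `PT4M13S`). The thresholds (under 10 minutes, at least 1,000 views) are placeholder values echoing figures mentioned in the text, not recommended cut-offs, and the three sample records are invented.

```python
import re

# Matches ISO 8601 durations limited to days, hours, minutes and seconds.
_DURATION = re.compile(
    r"^P(?:(?P<days>\d+)D)?"
    r"(?:T(?:(?P<hours>\d+)H)?(?:(?P<minutes>\d+)M)?(?:(?P<seconds>\d+)S)?)?$"
)


def duration_seconds(iso_duration):
    """Convert a duration such as 'PT4M13S' to a number of seconds."""
    match = _DURATION.match(iso_duration)
    if not match:
        raise ValueError(f"unsupported duration: {iso_duration!r}")
    parts = {name: int(value or 0) for name, value in match.groupdict().items()}
    hours = parts["days"] * 24 + parts["hours"]
    return (hours * 60 + parts["minutes"]) * 60 + parts["seconds"]


def meets_criteria(video, max_seconds=600, min_views=1000):
    """Placeholder rule: shorter than max_seconds and at least min_views."""
    return (duration_seconds(video["duration"]) < max_seconds
            and video["view_count"] >= min_views)


# Invented records for illustration.
videos = [
    {"video_id": "a", "duration": "PT4M13S", "view_count": 2500},
    {"video_id": "b", "duration": "PT15M", "view_count": 90000},
    {"video_id": "c", "duration": "PT3M", "view_count": 150},
]
included = [v["video_id"] for v in videos if meets_criteria(v)]
print(included)
```

Keeping the thresholds as named parameters makes it straightforward to report them and to rerun the selection with different values.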

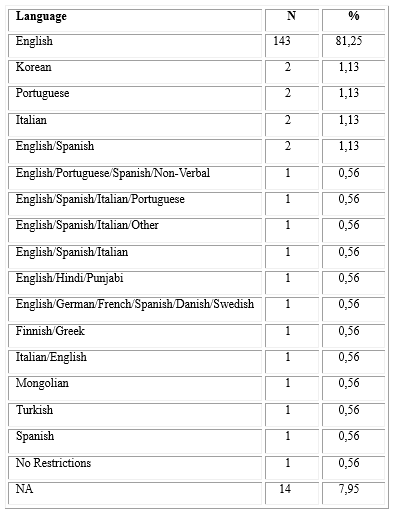

Language was another criterion of inclusion or exclusion. The main language of choice for the videos was English, and there is little variety in the analysis of videos in other languages. Nineteen studies (10.79 %) chose a different language such as Finnish, Greek,28 Mongolian,29 Korean30 and Italian.31 Because working teams were international, there was a combination of languages (table 3). Fourteen studies (7.95 %) did not indicate in which language the videos were published, but it can be inferred from other information in the papers that it was English. These data indicate that there is a need for content analysis in languages other than English.

Regarding the number of analysed videos, the average was 153.09 with a median of 89. This suggests that a video evaluation analysis should ideally have a sample of about 90 videos. Future research should validate this number.

An issue requiring further discussion is the number of search result pages from which videos are collected. Some authors indicated that users usually look at the first three pages;32 others say Google users look at the first page of results,33 and others analysed the first 10 pages. Four studies (2.2 %) stated that the result position was saved. This does not seem to be a good idea because results could change in the next search using the same term.

YouTube metadata

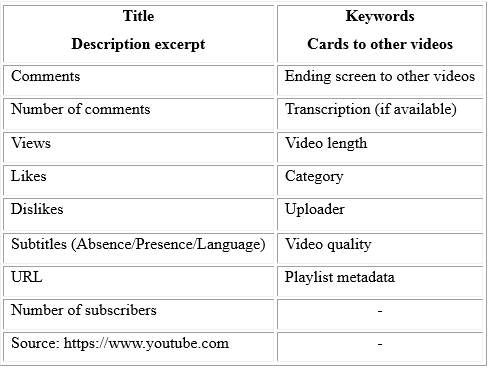

YouTube, like other social network sites, captures different metadata and public metrics. Metadata has varied since YouTube was created, and each study captured different metadata, depending on what was evaluated. Table 4 presents the metadata and metrics that can be captured on YouTube.

It is possible to retrieve keywords from the videos, but only by using third-party software. Just one study retrieved keywords from videos.15 Another item that can be captured initially is the original language of the video, but language is a criterion for inclusion or exclusion in the reviewed studies. In addition, it is possible to publish videos on YouTube with titles and descriptions in a language different from the original language of the video; for example, one can publish a video in English with the title and description in German. Therefore, the same video can appear in search results with the title and description in multiple languages.

Additional data can be captured from the “About” page of the uploader’s channel, including the total number of channel views, the date of the channel’s creation and the channel’s country of origin. In addition, a search for a channel name in the YouTube search engine reveals the number of published videos and the playlists created. Playlists are interesting to consider because they appear in the search results and usually have similar content. Only one article reported considering playlists,34 but only as an exclusion criterion. Table 5 presents the captured metadata from all articles.

Content quality evaluation methodologies

The literature mentions many video quality information evaluation methodologies; these often have been adapted from written information evaluations. To evaluate a certain medical technique, it is possible to use a specific standard or guide. To assess video content quality, however, there are different aspects that should be also considered in future research.

In video content analysis, it is necessary to not only assess the quality of the information but also the technical quality of the video. Online video content analysis should evaluate the sound, resolution and quality of the video as a whole or frame by frame. There are no standards or consistency in the methods that facilitate this assessment, however. Methods exist to evaluate the quality of medical information, but a standardized system of video and pedagogical quality must be defined for future research.

An element that seems unclear is the definition of an instructional video versus an educational video. An instructional video is one that shows how to do something or what to do in certain situations.35 An educational video in the health field is one that patients or doctors view to learn about a certain aspect (e.g. pain, diseases, type of surgery). The boundary between instructional and educational videos is blurred, however. Forty-nine studies (27.84 %) scored educational videos, and one (0.56 %) scored instructional videos. The rest of the reviewed articles evaluated issues such as information accuracy or YouTube as a source of information.

In addition, given the social scope of YouTube, defining professional-style videos as those with commercial gain intent or amateur-style videos as short homemade ones is not a good approach.36 Current technology enables people to create professional-looking videos, making it difficult to differentiate professional and amateur videos. This might not be true in the health arena, but it is true in other disciplines.

Different ways exist to evaluate the content quality of videos: 62 papers (35.22 %) in the sample used different scoring methods to assess the quality of information, and some also assessed technical quality. These scoring methods, however, were adapted from guidelines that assess written information, as there is no standard of evaluation.

The most used methods were Global Quality Systems (GQS; n = 14, 7.95 %), the Medical Video Rating System (MVRS; n = 3, 1.70 %) and the Suitability Assessment of Material (SAM; n = 1, 0.56 %).

GQS uses a 5-point Likert scale to evaluate information quality. It does not seem to be a good indicator, however, as this system is designed to evaluate the quality of information on websites.32 The MVRS system is divided into three parts: technical quality (light, sound, resolution, angle and duration), diagnostic accuracy and efficacy as a clinical example.36,37 SAM enables the user to evaluate the quality and materials of the videos.18

In addition, other authors have created their own systems to evaluate video information quality. For instance, different authors proposed a scoring system related to the audio and technical quality of the video as well as a score from the educational point of view on audio teaching quality with scores of 0, 0.5 and 1.14,38

Other authors propose a system to evaluate educational videos that examines targeting content, technical, authority and pedagogy parameters with scores from 0 to 2 without half scores.39 In addition, one group of authors used their own system to evaluate videos but did not consider the technical qualities of the videos. On the other hand, researchers adapted HONcode to evaluate the video information quality.40 Another type of assessment was used to evaluate the quality of information as useful or misleading, indicating the presence or absence of information in the video.41,42,43,44

Finally, some authors reported that the video evaluation systems are inadequate, noting issues such as the absence of standards for video evaluation,43,45 absence of indicators and tools to evaluate information on YouTube videos,46 and absence of tools to assess the quality of the videos in health information.47 In addition, quality indicators in patient education videos on YouTube are inconsistently adopted.48

Regarding YouTube as a source of accurate information, 86 studies (48.86 %) considered it to be a poor source of medical information for their research topic. They reported that the information they sought for further analysis was inaccurate or of poor quality. They also reported that information was missing or did not meet appropriate quality requirements and that, qualitatively, the videos did not provide appropriate information.

In contrast, 49 papers (27.84 %) reported that YouTube is a good source of information and pointed out that information within the videos was accurate or appropriate at an instructional or educational level. The remainder of the articles (n = 42, 23.86 %) either did not report on information accuracy or assessed other issues.

Among the reports on the accuracy of information, comments about quality and information control indicated that the content of the videos was incorrect, “not relevant”,49 “unsuitable as an educational tool”16 or “difficult to verify authenticity”.50

On the other hand, studies indicated that the content found in the videos was “content useful”.2 It must be noted, however, that these reports are on different subjects and specialties in health. Therefore, depending on the specialty from which they were analysed, the videos could be seen as accurate, of good quality and useful, or as inaccurate.

DISCUSSION

Scientific studies must be described so that they can be repeated and the results can be replicated. In the important area of health information videos on YouTube, several issues lead to a situation in which the quality of the research remains low. In most cases, the studies cannot be repeated as the complete list of videos is not provided.

As a social network site, YouTube raises different research questions, such as video uploading frequencies and social tagging. Yet researchers have tackled keywords, search strategies and the variety of evaluation methods for content analysis and algorithm dependence.51 There is therefore a lack of information competence in some of the study designs, which is reflected in the reviewed literature. Moreover, some descriptions of study design are incomplete, an area that deserves attention.

Although YouTube is a very good primary information source, the frequency with which videos are uploaded and deleted is an issue to be faced. During research, new videos might appear, and existing ones might disappear, affecting search results. Thus, unless videos are downloaded during the retrieval phase, some information can be gained while other information is lost.

Regarding information competence, authors are unaware of the need to describe exactly the search terms and parameters used to obtain their results.52 They are also unaware of the personalisation and dynamics of the recommendation algorithm, which lead to a situation in which YouTube delivers very different results for the same query, even at the same time. This means that, in most cases, the analyses cannot be repeated.
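One way to make a search easier to repeat is to store the exact query, its parameters, the retrieval time and the list of retrieved video IDs at the moment of retrieval. The following sketch writes such a log as CSV; the column names and the example values are hypothetical, not taken from any reviewed study.

```python
import csv
import io
from datetime import datetime, timezone

FIELDS = ["retrieved_at", "query", "sort_order", "language", "video_id"]


def write_search_log(handle, query, sort_order, language, video_ids, retrieved_at=None):
    """Write one CSV row per retrieved video and return the number of rows."""
    if retrieved_at is None:
        retrieved_at = datetime.now(timezone.utc).isoformat(timespec="seconds")
    writer = csv.DictWriter(handle, fieldnames=FIELDS)
    writer.writeheader()
    for video_id in video_ids:
        writer.writerow({
            "retrieved_at": retrieved_at,
            "query": query,
            "sort_order": sort_order,
            "language": language,
            "video_id": video_id,
        })
    return len(video_ids)


# Hypothetical values; an in-memory buffer stands in for a real file.
buffer = io.StringIO()
rows = write_search_log(
    buffer,
    query="hypothetical search phrase",
    sort_order="relevance",
    language="en",
    video_ids=["VIDEO_ID_1", "VIDEO_ID_2"],
    retrieved_at="2018-05-01T00:00:00+00:00",
)
print(buffer.getvalue())
```

Publishing a file of this kind as supplementary material would give readers the complete list of analysed videos together with the parameters that produced it.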

According to the review, having a research librarian on the research team to design the keyword search strategies and retrieval methodologies could improve research.12 Also, researchers should consider using data mining tools to download videos and their metadata or anonymous browsing to retrieve videos; both would help avoid dependency on YouTube algorithms, which is crucial for the possibility of repeating an analysis.34

Regarding content analysis and related to the data obtained in the review, it seems that research in content analysis is trustworthy when analysing 90 videos with a duration of 10-20 minutes. More research is needed in this regard, however, as specialists in the same field can find different results.

There are some limitations in this study. First, the papers analysed concerned information quality in YouTube videos, and articles using sentiment analysis were not reviewed. Although the sample was large compared with other reviews, it is likely that other articles were missed. Second, the PubMed database contains papers that are not indexed by MeSH descriptors or keywords; such articles are indexed only by author, paper title or DOI. Because of that, it is also likely that other evaluation methodologies did not appear in the search results. Third, the Educational Measurement descriptor in MeSH refers to the assessment of academic or educational achievement, and video is excluded as a learning resource within teaching strategies.

There are many evaluation methodologies in quality information content analysis. It seems necessary to create a homogeneous and standardized video evaluation system in a consensual manner, or the different methodologies could lead to different results even in the same area of health. Also, quality is a subjective term that should be quantified in a video evaluation system, and issues such as sound or clarity of images do not appear in the literature when evaluating a video. In future work, a video evaluation system will be proposed to address these issues.

Finally, special attention to keyword search strategy, retrieval methodologies and avoiding algorithm dependencies should be considered in future research.

CONCLUSION

YouTube is a primary source of information for analysis, but as stated in this literature review, there are many issues to be solved. An online video evaluation system is necessary. It should be standardised with the right indicators. Although the presence of metadata has been used to evaluate videos, other indicators such as sound quality, image quality or information accuracy are needed in a field like health. Indicators on the number of views are also required, but this is the least reliable parameter.

It is also necessary to create specific health websites where content is controlled, for example by a librarian as in the case of https://av.tib.eu, to avoid incorrect publications with problems such as missing information or clickbait. Not only will this benefit research, but it will make issues such as controlled vocabulary in the retrieval of information less of a stumbling block.